Social Proofing

This project was conducted as part of a broader effort to evaluate strategies for boosting user engagement with vacation rental listings. The project’s objective was to test and analyze the efficacy of social proofing statements in optimizing user engagement.

Intro

Role

Assistant Researcher

Tools

UserTesting

Figma

Duration

3 months (December ’24 – February ’25)

Background

This research continued a previous project that explored how social proofing statements affect vacation rental listings. The goal was to keep testing the efficacy of social proofing language to see what effect, if any, it has on keeping potential guests engaged with listings for longer.

My team learned from the first test that users occasionally engage with social proofing statements, but that the statements have little to no influence on whether users go on to book.

For part two of this initiative, we wanted to learn whether different social proofing strategies yield different results.

Problem

With a multitude of booking platforms and a vast array of rental properties available to consumers, it’s hard to create a stand-out vacation rental listing, especially in saturated markets. Because the short-term rental business is constantly evolving, we want to confirm that performance-boosting strategies are effective before investing in their development.

Research Plan

Research Questions

How does including social proofing statements impact users’ perception of and confidence in the listing?

Do users notice these social proofing statements?

Do social proofing statements keep users engaged with the listing for longer?

Methodology

Two unmoderated usability tests, each evaluating a different social proofing statement

Recruitment

N = 10 (5 per test)

Age: 28-65

Annual Income: $40,000+

Employment Status: Employed, retired, unable to work, stay-at-home parent

Device: Smartphone

Analysis

UserTesting’s built-in analysis tools

Deliverables

Slide deck presentation

Research

Two usability tests were conducted on the UserTesting platform to evaluate two distinct variations of social proofing.

Both tests began with screener questions to ensure our test subjects had familiarity with booking vacation rentals.

Screening

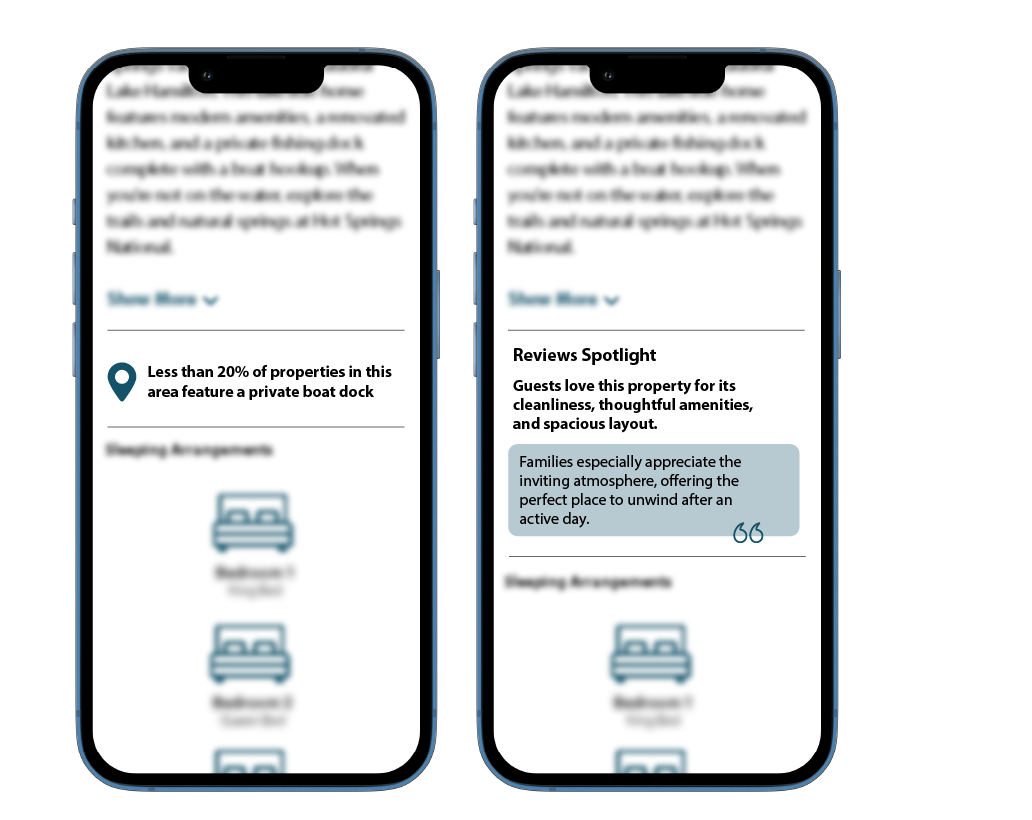

The first test explored the effectiveness of scarcity messaging, a marketing strategy that highlights the limited availability of a specific product (or in this case, amenity) to manufacture a sense of desirability and urgency. For this study, we chose to highlight that our lakefront property is one of only a few other properties in the area with a private dock.

The second test was centered around a reviews spotlight – a short snippet of text that compiled some of the top themes highlighted throughout the property’s reviews. For this study, the verbiage used emphasized the home’s primary audience and welcoming atmosphere.

Testing

When you travel, what type of lodging do you primarily stay in?Accepted:

Vacation rentals

How many times do you book and stay in a vacation rental per year?Accepted:

2-4, 5+

Who is responsible for finding and booking properties for your travels?Accepted:

Self, shared responsibility

Analysis

I used UserTesting’s built-in analysis tools to analyze the qualitative data collected from these sessions. The sentiment analysis feature made it easy to pinpoint moments of friction as participants worked through the prompts, and the smart tags feature refined these moments by labeling the specific sentiments each participant expressed.

These tools, combined with an in-depth review of each session’s transcript, allowed me to identify trends and generate behavioral and attitudinal insights.

Conclusion

Findings

Scarcity Messaging Test:

Results from the scarcity messaging test showed that users did not engage with the scarcity messaging, suggesting it is not an effective way to boost interest in a vacation rental property. Users viewed the messaging as informative rather than distinctive. They also felt it was redundant, since the highlighted information could easily be found elsewhere in the listing.

Reviews Spotlight Test:

While users noticed and lightly engaged with the reviews spotlight, they questioned the validity of the highlighted insights and were skeptical of how they were generated. Users wanted to know whether the language was AI-generated or handpicked by the homeowner.

As in the scarcity messaging test, users found the information redundant. They noted that, assuming the spotlight accurately represented the listing, they could identify the same trends themselves by reading the reviews.

Outcomes

Given the lack of engagement in both tests, the team decided to abandon scarcity messaging and review spotlighting. The team continues to test the efficacy of other engagement-enhancing strategies and is also exploring other avenues of social proofing.

Although neither strategy was deployed, the tests still provided valuable insight into how potential travelers engage with vacation rental listings. They underscored how influential 5-star reviews are in a traveler’s decision to book a vacation rental. The usability tests also offered a glimpse into navigational behaviors, such as potential guests scrolling straight down to the reviews before engaging with the listing description.

UserTesting is a great platform for generating quick insights at low cost, but its simplicity can undermine the recruitment process. Although participants are screened and paid for their time, there is still a risk of recruiting disengaged participants.

While conducting the unmoderated usability tests, I received a couple of sessions in which the participant either didn’t read the prompts or completed the tasks while engaged in other activities, causing them to miss certain segments. To make up for these failed sessions, I added a couple more in the hope of attracting more engaged participants.

My experience with UserTesting has taught me that online research platforms require careful planning and oversight when it comes to recruitment. While not every usability session will be equally productive, fine-tuning the screener questions may help screen out less engaged participants.